The "Free" AI Trap: You’re Accidentally Training Your Competitors

You’ve seen the "Free" version. Your accountant is using it to summarize spreadsheets. Your marketing person is using it to write LinkedIn posts. Even your nephew is probably using it to get out of writing a history paper.

It’s fast. It’s easy. And it’s free.

But here’s the universal truth we’ve learned from decades in the IT trenches: If you aren't paying for the product, you are the product. When you use the free version of a tool like ChatGPT, Claude, or Gemini, you aren't paying with cash. You’re paying with your company’s data. And here’s the part your IT guy isn't telling you: You might be accidentally giving your "Secret Sauce" to your biggest competitor.

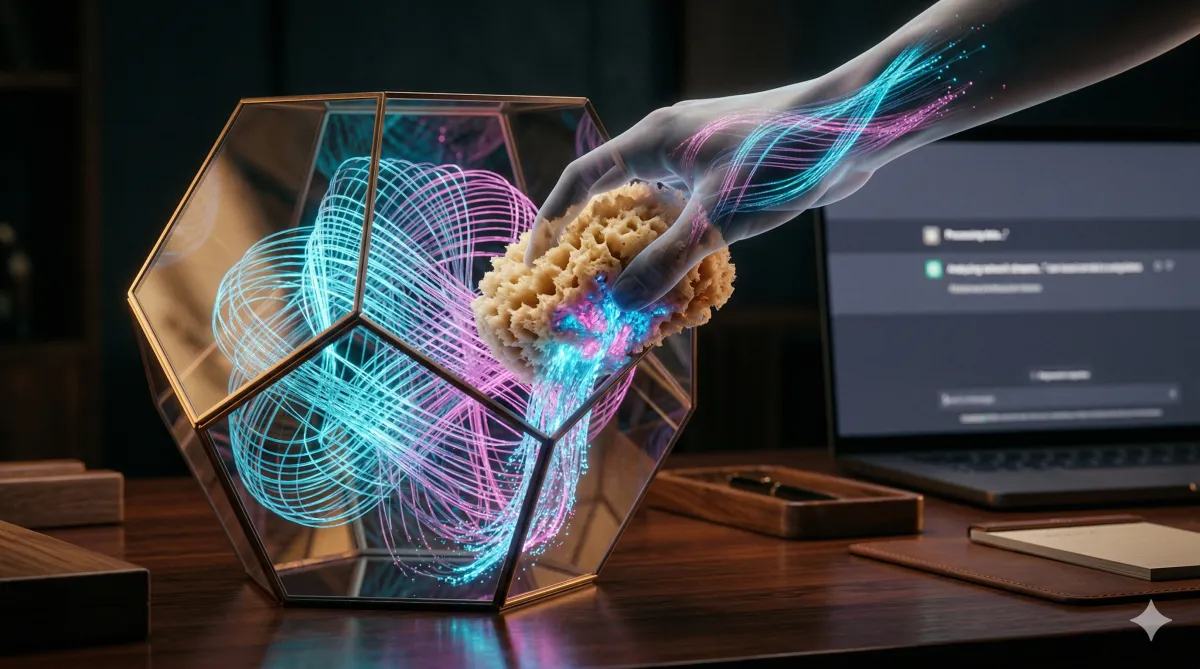

The "Training Sponge" (and the Competitive Leak)

Think of a public AI tool as a giant, digital sponge. Every time you type a prompt, you’re pouring liquid into that sponge.

The AI doesn’t just "read" your prompt to give you an answer; it can absorb it. By default, many free tiers reserve the right to use your conversations to train the next version unless you opt out. Now, imagine your rival company down the street asks that same AI for a "marketing strategy for a mid-sized business in Dallas."

If you’ve been feeding the AI your client lists, pricing models, or proprietary logic, there is a real, scary possibility that the AI will use your "wisdom" to help your competitor win. According to Cyberhaven’s data security report, about 11% of the data employees paste into ChatGPT is sensitive.

The Samsung Warning Shot (Real Talk)

Don't think this is just a "what if" scenario. It’s already happened to the big guys.

In 2023, engineers at Samsung reportedly leaked confidential source code by pasting it into ChatGPT to help fix bugs. They thought they were being "efficient." Instead, they were handing their proprietary intellectual property to a third-party company for free.

If it can happen to a multibillion-dollar tech giant with thousands of security experts, it can definitely happen to your office in Plano.

The "Privacy Silo" (Why the $20-30/mo is Worth It)

The difference between "Free AI" and "Enterprise AI" isn't just a few extra features or a fancy logo. It’s the Privacy Silo.

When you pay for tools like Microsoft 365 Copilot or ChatGPT Enterprise, you are buying a "Private Instance."

The "No-Training" Rule: Your data stays in your house. Under the enterprise terms, the vendor commits in writing not to use your prompts to train the public model.

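The Compliance Lock: These tools are typically SOC 2 audited and can support HIPAA compliance (usually under a signed business associate agreement), so your client data gets protections much closer to what it has on your encrypted server.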

The Texas Tech-Sage Truth: It’s cheaper to pay $30 a month for a secure license than to pay a lawyer $300 an hour to deal with a data breach. A full year of that license runs $360, which buys you about 72 minutes of that lawyer’s time.

How to Wring Out the Sponge

You don’t have to ban AI. That’s like trying to ban the internet in 1995: you’re just going to make your team hide it from you. Instead, do this:

Kill the Free Accounts: If a tool is being used for work, it needs to be an Enterprise account. Period. No exceptions.

Audit the "Shadow AI": Use a tool (or a company like us) to see what’s actually leaving your network. As we mentioned in our Meta AI Safety breakdown, your team is likely using more AI than they’re telling you.

Deploy the Guardrails: Your team needs to know exactly what is "Safe" vs. "Suicidal" to upload.
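
For the technically inclined, here is a rough sketch of what the "Shadow AI" audit step can look like. It is a minimal example, not a finished tool: it assumes you can export web proxy or DNS logs as lines in the form "timestamp user host", which is a made-up format for illustration, and the domain watch list is a hypothetical starting point you would adjust for your own office.

```python
from collections import Counter

# Illustrative watch list of consumer AI chat domains (an assumption;
# extend or trim it for the tools your team actually uses).
AI_DOMAINS = {"chatgpt.com", "chat.openai.com", "claude.ai", "gemini.google.com"}


def is_ai_domain(host: str) -> bool:
    """Return True if host is a watched domain or a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in AI_DOMAINS)


def count_ai_hits(log_lines):
    """Count requests to watched AI domains per user.

    Assumes each line looks like "<timestamp> <user> <host>" (hypothetical
    format). Lines without exactly three fields are skipped.
    """
    hits = Counter()
    for line in log_lines:
        parts = line.split()
        if len(parts) != 3:
            continue
        _timestamp, user, host = parts
        if is_ai_domain(host):
            hits[user] += 1
    return hits


if __name__ == "__main__":
    # Made-up sample data, only to show the shape of the input.
    sample = [
        "2024-05-01T09:00:00 alice chatgpt.com",
        "2024-05-01T09:05:00 bob example.com",
        "2024-05-01T09:07:00 alice claude.ai",
        "2024-05-01T09:09:00 carol gemini.google.com",
    ]
    for user, n in count_ai_hits(sample).most_common():
        print(f"{user}: {n} AI requests")
```

A count like this only tells you who is reaching these sites, not what they pasted in. That is usually enough to start the conversation about moving those users onto a paid, protected account.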

We’ve already done the heavy lifting for you. We created a one-page AI Ground Rules template. It’s written in plain English, it fits on one page, and it tells your team exactly how to use these tools without wrecking the company.

Grab Your Copy of the AI Ground Rules Template